ATI's New High End and Mid Range: Radeon X1950 XTX & X1900 XT 256MB

by Derek Wilson on August 23, 2006 9:52 AM EST - Posted in GPUs

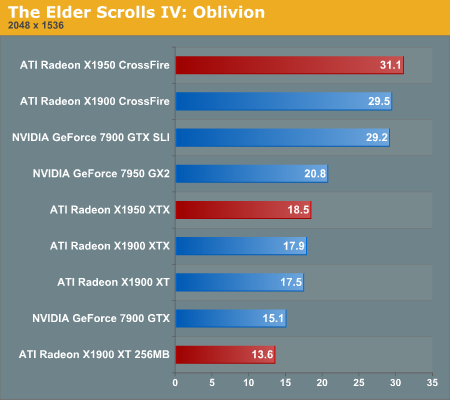

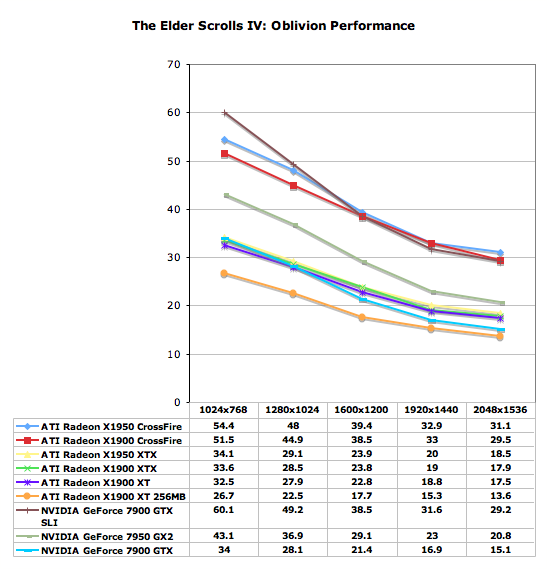

The Elder Scrolls IV: Oblivion Performance

While it is disappointing that Oblivion doesn't have a built-in benchmark, our FRAPS tests have proven to be fairly repeatable and very intensive on every part of a system. While these numbers will reflect real-world playability of the game, please remember that our test system uses the fastest processor we could get our hands on. If a purchasing decision is to be made using Oblivion performance alone, please check out our two articles on the CPU and GPU performance of Oblivion. We have used the most graphically intensive benchmark in our suite, but the rest of the platform will make a difference. We can still easily demonstrate which graphics card is best for Oblivion even if our numbers don't translate to what our readers will see on their systems.

Running through the forest towards an Oblivion gate while fireballs fly by our head is a very graphically taxing benchmark. To run it, we load a saved game and record the run with FRAPS. To start the benchmark, we hit "q", which simply runs forward, and we start and stop FRAPS at predetermined points in the run. While not 100% identical each run, our benchmark scores are usually fairly close. We run the benchmark a couple of times just to be sure there wasn't a one-time hiccup.
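To make the "fairly close" check concrete, here is a minimal Python sketch of how repeated FRAPS passes could be averaged and screened for a one-time hiccup. The function name `summarize_runs`, the 5% tolerance, and the sample numbers are illustrative assumptions, not part of the procedure or the results reported in this article.

```python
from statistics import mean


def summarize_runs(fps_runs, tolerance=0.05):
    """Average several benchmark passes and check how closely they agree.

    fps_runs  -- average fps of each pass (hypothetical values in the example)
    tolerance -- assumed maximum relative spread before a rerun is warranted
    """
    if not fps_runs:
        raise ValueError("need at least one benchmark pass")
    avg = mean(fps_runs)
    # Relative spread: gap between best and worst pass as a fraction of the mean.
    spread = (max(fps_runs) - min(fps_runs)) / avg
    return {"mean_fps": avg, "spread": spread, "consistent": spread <= tolerance}


if __name__ == "__main__":
    # Made-up numbers for illustration only; not measurements from this review.
    print(summarize_runs([31.2, 30.8, 31.0]))
```

If the passes disagree by more than the chosen tolerance, the simplest response is to run the benchmark again rather than report the average.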

As for settings, we tested a few different configurations and decided on this group of options:

| Oblivion Performance Settings | |
| --- | --- |
| Texture Size | Large |
| Tree Fade | 100% |
| Actor Fade | 100% |
| Item Fade | 66% |
| Object Fade | 90% |
| Grass Distance | 50% |
| View Distance | 100% |
| Distant Land | On |
| Distant Buildings | On |
| Distant Trees | On |
| Interior Shadows | 95% |
| Exterior Shadows | 85% |
| Self Shadows | On |
| Shadows on Grass | On |
| Tree Canopy Shadows | On |
| Shadow Filtering | High |
| Specular Distance | 100% |
| HDR Lighting | On |
| Bloom Lighting | Off |
| Water Detail | High |
| Water Reflections | On |
| Water Ripples | On |
| Window Reflections | On |
| Blood Decals | High |
| Anti-aliasing | Off |

Our goal was to get acceptable performance levels on the current generation of cards at 1600x1200. This was fairly easy with the range of cards we tested here. These settings look amazing and are very enjoyable. While more is better in this game, no current computer will give you everything at high resolution. Only the best multi-GPU solution and a great CPU are going to give you settings like the ones we have at high resolutions, but who cares about grass distance, right?

While very graphically intensive, and first person, this isn't a twitch shooter. In our experience, 20fps plays well. It's playable a little lower, but watch out for some jerkiness that may pop up. Getting down to 16fps and below is too low to be acceptable. The main point to bring home is that you really want as much eye candy as possible. While Oblivion is an immersive and awesome game from a gameplay standpoint, the graphics certainly help draw the gamer in.
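The thresholds above can be written down as a small lookup. This Python sketch is only an illustration: the function name and band labels are hypothetical, and the cutoffs are simply the 20fps and 16fps figures quoted in the text, which are subjective judgments for this game rather than general rules.

```python
def rate_oblivion_fps(fps):
    """Map an average frame rate to the playability bands described in the text.

    The labels are hypothetical; the 20fps and 16fps cutoffs come from the article.
    """
    if fps >= 20:
        return "good"
    if fps > 16:
        # Between 16 and 20fps: playable, but jerkiness may pop up.
        return "playable"
    return "too low"
```

For example, `rate_oblivion_fps(18)` falls in the middle band, where the game is still playable but may not feel smooth.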

Oblivion is the first game in our suite where ATI's latest and greatest actually ends up on top. The margin of victory for the X1950 CrossFire isn't tremendous, measuring in at 6.5% over the 7900 GTX SLI.

As a single card, the 7950 GX2 does better than anything else, but as a multi-GPU setup it's not so great. The 12% performance advantage at 2048 x 1536 only amounts to a few more fps, but as you'll see in the graphs below, at lower resolutions the GX2 actually manages a much better lead. A single X1950 XTX is on the borderline of where Oblivion performance starts feeling slow, but we're talking about some very aggressive settings at 2048 x 1536, something that was simply unimaginable for a single card when this game came out. Thanks to updated drivers and a long-awaited patch, Oblivion performance is no longer as big an issue if you've got any of these cards. We may just have to dust off the game ourselves and continue in our quest to steal as much produce from as many unsuspecting characters in the Imperial City as possible.
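For readers who want to check why a double-digit percentage lead can amount to only a few frames per second, the arithmetic can be sketched in Python as follows. The function names and the 25fps baseline are assumptions chosen for illustration; they are not measured results from this review.

```python
def percent_lead(fps_a, fps_b):
    """Return how much faster A is than B, as a percentage of B's frame rate."""
    if fps_b <= 0:
        raise ValueError("baseline fps must be positive")
    return (fps_a - fps_b) / fps_b * 100.0


def fps_gain(baseline_fps, lead_percent):
    """Convert a percentage lead back into extra frames per second."""
    return baseline_fps * lead_percent / 100.0


if __name__ == "__main__":
    # Hypothetical baseline of 25fps: a 12% lead is only about 3 extra frames.
    print(fps_gain(25.0, 12.0))
    print(percent_lead(28.0, 25.0))
```

The same percentage lead is worth more absolute frames at lower resolutions, where the baseline frame rate is higher.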

Oblivion does like having a 512MB frame buffer, and it punishes the X1900 XT 256MB pretty severely for skimping on the memory. If you do enjoy playing Oblivion, you may want to try to pick up one of the 512MB X1900 XTs before they eventually disappear (or start selling for way too much).

In contrast to Battlefield 2, it seems that NVIDIA's 7900 GTX SLI solution is less CPU limited at low resolution than ATI's CrossFire. Of course, it's the higher resolutions we are really interested in, and each of the multi-card options we tested performs essentially the same at 1600x1200 or higher. The 7950 GX2 seems to drop off faster than the X1950 XTX, allowing ATI to close the gap between the two. While the margin does narrow, the X1950 XTX can't quite catch the NVIDIA multi-GPU single card solution. More interestingly, Oblivion doesn't seem to care much about the differences between the X1950 XTX, X1900 XTX, and X1900 XT. While the game does seem to like 512MB of onboard memory, large differences in memory speed and small differences in core clock don't seem to impact performance significantly.

74 Comments


TigerFlash - Wednesday, August 23, 2006 - link

I suppose I worded that the opposite way. Do you think Intel will stop supporting Crossfire cards?

michal1980 - Wednesday, August 23, 2006 - link

Can we not even get any numbers for cards below the 7900GTX.I understand your limited, but how about some numbers from some cards below that, to see what an upgrade would do.

I know we can kind of take test from old reviews of the cards, but your test bed has changed since core 2, so its not a fair heads to heads test of old numbers to new.

it would be nice to see if theres a point(wise or not) to upgrade from a 7800gt or that gen of cards, or something slower like a 7900gt.

but it seems like ever 'new gen' card test just drops off 'older' cards

michal1980 - Wednesday, August 23, 2006 - link

what i meant is that on the tables, or where all the new cards are, it would be nice to have some numbers for old cards.

Lifted - Wednesday, August 23, 2006 - link

Agreed. I'm still running a 6800GT and have not seen much of a reason to upgrade with the current software I run. Perhaps if I saw that newer games are 3x faster I might consider an upgrade, so how about it?